STUDENT DIGITAL NEWSLETTER ALAGAPPA INSTITUTIONS

Faridali Rashji, MD, FRCPC

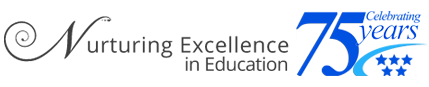

Perhaps the most interesting aspect of ion exchange membranes in this respect is that they can be used directly in the test solution. Additionally, the ion exchange membrane itself can be used as a preconcentration matrix for subsequent determinations by activation analysis, emission spectrophotometry, or absorption spectrophotometry. The main problem associated with using cation-exchange membranes for transition and heavy metals in natural waters and wastewaters is their lack of specificity. This is particularly significant since the alkalies and alkaline earths are usually present in great excess. Another limitation to the use of cation-exchange membranes for natural waters and wastewaters is the fact that most metals are found in the form of inorganic or organic complexes. The separation of such complexed metal ions by ion exchange is sometimes not possible unless the complex is first disrupted. Valuable schemes for the separation of a large number of metal ions by successive solvent extractions employ different complexing agents and organic solvents. Selection of an appropriate solvent-extraction system depends on its specificity and its suitability for subsequent analytical procedures. Partial freezing has been used for the concentration of Fe, Cu, Zn, Mn, Pb, Ni, Ca, Mg, and K in water samples in concentrations ranging from 0. The alkali and alkaline earth metals (K, Ca, and Mg) concentrate best at low pH, while heavy metal cations (Pb, Ni, and Cu) concentrate best under alkaline conditions [54].
Analytical techniques
Nevertheless, the analyst can rely on a number of techniques which offer some of the above advantages. A listing of some of the applicable analytical procedures and their sensitivity limits is given in Table 10.
Exceptions are those few cases in which unfavorable matrix components are present in the sample solution. This is largely a result of the presence of certain compounds which combine with the metal under analysis to form relatively nonvolatile compounds that do not break down in the flame. Matrix effects may be minimized by separation before measurement, or by adding approximately the same amount of matrix component to the standard solutions. In contrast to flame photometry, there is very little interelement interference in atomic absorption spectrophotometry. Also, while sensitivity in flame photometry is critically dependent on flame temperature, this is not the case for atomic absorption spectrophotometry. The sensitivity can be vastly increased, to the part per billion range, by scale expansion or by extracting the metals into a nonaqueous solvent and spraying the extract into the flame. Microgram per liter quantities of cobalt, copper, iron, lead, nickel, and zinc have been determined in saline waters by extraction of their complexes with ammonium pyrrolidine dithiocarbamate into methyl isobutyl ketone [56]. Organic solvents may alter the atomizer efficiency: an increase of about 60 percent in atomizer efficiency can be achieved with their use. Although atomic absorption spectrophotometry is a relatively new technique, it is being applied widely for the analysis of metal ions in natural waters and wastewaters. In addition to being selective and sensitive, atomic absorption spectrophotometers are easy to operate and maintain. Atomic fluorescence spectrophotometry offers no increase in selectivity over atomic absorption spectrophotometry, since the latter is already virtually specific for each element. It is possible, however, to increase the sensitivity of measurements with atomic fluorescence spectrophotometry by increasing the intensity of irradiation, or by increasing the amplification until the system becomes noise limited [57].
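Matching the matrix of the standards to that of the samples only pays off once those standards are used to build a calibration curve. The sketch below is a minimal, hypothetical illustration rather than a procedure from the cited work: it assumes a linear absorbance-concentration response over the working range, uses made-up absorbance readings for four matrix-matched standards, fits an ordinary least-squares line, and inverts it to estimate an unknown.

```python
# Minimal sketch: linear calibration with matrix-matched standards.
# Assumes a linear response; all numbers are hypothetical.

def fit_calibration(conc, signal):
    """Ordinary least-squares fit of signal = slope * conc + intercept."""
    n = len(conc)
    mean_x = sum(conc) / n
    mean_y = sum(signal) / n
    sxx = sum((x - mean_x) ** 2 for x in conc)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(conc, signal))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def concentration_from_signal(signal, slope, intercept):
    """Invert the calibration line to estimate a sample concentration."""
    return (signal - intercept) / slope


# Hypothetical standards (µg/L), each containing the same matrix as the samples
standards_ug_per_l = [0.0, 5.0, 10.0, 20.0]
absorbances = [0.002, 0.052, 0.102, 0.202]

slope, intercept = fit_calibration(standards_ug_per_l, absorbances)
sample_ug_per_l = concentration_from_signal(0.077, slope, intercept)
print(f"slope={slope:.4f} per µg/L, intercept={intercept:.4f}")
print(f"estimated sample concentration: {sample_ug_per_l:.2f} µg/L")
```

If the standards were prepared in pure water instead, any matrix suppression in the samples would bias the slope and therefore every estimate read off the line.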
For absorption spectrophotometry, the absorbance, $A$, is directly related to the concentration of the absorbing species, $C$, the length of the light path through the absorbing solution, $l$, and the molar absorptivity of the absorbing species, $\varepsilon$; i.e., $A = \log_{10}(I_0/I) = \varepsilon l C$. Because $A$ depends only on the ratio of the incident intensity, $I_0$, to the transmitted intensity, $I$, increasing $I_0$ does not increase the analytical signal in absorption measurements. However, for fluorescence spectrophotometry, any increase in $I_0$ will be matched by a corresponding increase in the analytical signal $F$, as indicated in equation (12). Also, any increase in the amplifier gain in absorption spectrophotometry will amplify $I_0$ and $I$ correspondingly, leaving $A$ unchanged, whereas in fluorescence spectrophotometry it will result in an increase in $F$. For these reasons, fluorescence spectrophotometry can be made more sensitive than absorption spectrophotometry. Application of activation analysis to natural waters and wastewaters generally requires radiochemical separation after irradiation of the sample, and comparison of the activities of the unknown sample and of a known mass of standard treated under identical conditions. Elements in a given water sample may be characterized by identifying the type, energy, and half-life of the emission. The concentration of a given element in a sample is determined by quantifying the intensity, $I$, of its characteristic emission. A wide range of separation techniques has been associated with activation analysis procedures, some of which have been automated effectively [60]. Considerable improvement in the resolution of individual peaks in gamma-ray spectra has been accomplished by replacing sodium iodide scintillation detectors with germanium semiconductor detectors. Detailed procedures for activation analysis of natural waters and wastewaters have been reported [61-63]. Errors in analysis are due primarily to self-shielding, unequal flux at the sample and standard positions, inaccurate counting procedures, and counting statistics. Computers may be applied to the problem of identifying the components of complex spectra, and also to determine optimum conditions for irradiation of particular samples.
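The argument about source intensity and amplifier gain can be checked numerically. This is a minimal sketch, not the document's equation (12): it assumes the Beer-Lambert law for absorbance and a common textbook form for the fluorescence signal, $F = k\,\phi\,I_0\,(1 - 10^{-\varepsilon l C})$, where the instrument constant $k$, the quantum yield $\phi$, and all numerical values are hypothetical. Raising $I_0$ tenfold leaves $A$ unchanged but multiplies $F$ tenfold.

```python
import math

# Sketch only: Beer-Lambert absorbance versus an assumed textbook fluorescence
# model. epsilon (L/mol/cm), path length (cm), concentration (mol/L), k and phi
# are hypothetical values chosen for illustration.

def transmitted(i0, epsilon, path_cm, conc):
    """Beer-Lambert: I = I0 * 10**(-epsilon * l * C)."""
    return i0 * 10 ** (-epsilon * path_cm * conc)


def absorbance(i0, i):
    """A = log10(I0 / I); depends only on the intensity ratio."""
    return math.log10(i0 / i)


def fluorescence(i0, epsilon, path_cm, conc, phi=0.5, k=1.0):
    """Assumed model: F = k * phi * (light absorbed), proportional to I0."""
    return k * phi * i0 * (1 - 10 ** (-epsilon * path_cm * conc))


EPS, PATH, CONC = 1.0e4, 1.0, 1.0e-6   # gives A = 0.01

results = {}
for i0 in (100.0, 1000.0):
    i = transmitted(i0, EPS, PATH, CONC)
    results[i0] = (absorbance(i0, i), fluorescence(i0, EPS, PATH, CONC))
    print(f"I0={i0:7.1f}  A={results[i0][0]:.4f}  F={results[i0][1]:.4f}")

# A fixed amplifier gain scales both I0 and I, so A is unchanged:
gain = 50.0
i = transmitted(100.0, EPS, PATH, CONC)
print(f"A with gain applied: {absorbance(gain * 100.0, gain * i):.4f}")
```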
Some of the main advantages of activation analysis are (a) its very high sensitivity, (b) the rapidity of analysis, and (c) the nondestructive nature of the test. Although activation analysis offers the high sensitivity required for trace metal analysis, its use is limited by the availability of reactor facilities; furthermore, it is an elemental analysis procedure that offers no information about oxidation state or the degree and type of metal complexation. This is surprising, since electroanalytical techniques, which are most suitable for metal analysis, offer a number of unique advantages not shared by other methods of analysis. Generally speaking, the main advantages offered by electroanalytical procedures are (a) the ability to characterize species, (b) in situ analysis, and (c) suitability for field operation. A summary of some of the more important basic relationships underlying electroanalytical procedures is given in Table 11.
Polarographic Techniques
However, by means of preconcentration techniques it may be possible to extend the sensitivity range significantly. Preconcentration by ion exchange, freeze drying, evaporation, or electrodialysis may be used. A significant problem in the application of classical polarography to natural waters and wastewaters is the effect of interferences by electroactive and surface-active impurities. Such impurities, frequently present in wastewaters, interfere with electrode reaction processes and cause a suppression and/or a shift of the polarographic wave [67]. Modifications of polarographic techniques, such as "differential polarography" and "derivative polarography," may be used to increase the sensitivity and minimize the effect of interferences [68]. Pulse polarography has the advantage of extending the sensitivity of determination to approximately 10-^M. The technique is based on the application of short potential pulses of 50 ms on either a constant or a gradually increasing background voltage.
Following application of the pulse, current measurements are usually made after the spike of charging current has decayed. The limiting current in pulse polarography is larger than in classical polarography. Derivative pulse polarography, which is based on superimposing the voltage pulse upon a slowly changing potential (about 1 mV/s) and recording the difference in current between successive drops versus the potential, is even more sensitive than pulse polarography [68]. Cathode-ray (oscillographic) polarography has been used for analysis of natural waters and wastewaters, with a sensitivity of 10-W being reported [69-71]. This technique uses a cathode-ray oscilloscope to display the current-potential curves produced by rapid (saw-tooth) sweeps of the applied potential during the lifetime of a single mercury drop. Oscillographic polarography has the advantages of (a) relatively high sensitivity, (b) high resolution, and (c) rapidity of analysis. One of the most useful electrochemical approaches to metal analysis in trace quantities is anodic stripping voltammetry. This technique involves two consecutive steps: (a) the electrolytic separation and concentration of the electroactive species to form a deposit or an amalgam on the working electrode, and (b) the dissolution (stripping) of the deposit. The pre-electrolysis step is performed under carefully controlled conditions of potential, electrolysis time, and solution hydrodynamics. The stripping step is usually done in an unstirred solution by applying a potential (either constant or varying linearly with time) of a magnitude sufficient to drive the reverse electrolysis reactions. Quantitative determinations are made either by integrating the current-time curves (controlled-potential coulometry) or by measuring the peak current (chronoamperometry with potential sweep).
Greater sensitivity has been achieved by use of electrodes consisting of thin films of mercury on a substrate of platinum, silver, nickel, or carbon [75]. Errors due to non-faradaic (capacitive) current components can be minimized by proper choice of stripping technique.
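When the stripping peak is quantified coulometrically, the integrated charge converts to an amount of metal through Faraday's law, $N = Q/(nF)$. The sketch below assumes that the non-faradaic (charging) background has already been subtracted, that stripping proceeds with 100 percent current efficiency, and that the metal is lead ($n = 2$); the current trace is a made-up triangular peak sampled once per second.

```python
# Sketch: charge under a stripping peak -> moles and mass of metal.
# Assumes background-corrected current and 100% current efficiency.

FARADAY_C_PER_MOL = 96485.332  # Faraday constant (rounded)


def trapezoid(times, values):
    """Integrate sampled values over time with the trapezoidal rule."""
    return sum(
        (times[k + 1] - times[k]) * (values[k] + values[k + 1]) / 2
        for k in range(len(times) - 1)
    )


def stripped_moles(times_s, current_a, n_electrons):
    """Faraday's law: moles = Q / (n * F)."""
    charge_c = trapezoid(times_s, current_a)
    return charge_c, charge_c / (n_electrons * FARADAY_C_PER_MOL)


# Hypothetical triangular stripping peak (microamperes, 1 s sampling)
times_s = [float(t) for t in range(11)]
current_ua = [0, 1, 2, 3, 4, 5, 4, 3, 2, 1, 0]
current_a = [c * 1e-6 for c in current_ua]

# Anodic stripping of lead: Pb -> Pb2+ + 2e-
charge_c, moles = stripped_moles(times_s, current_a, n_electrons=2)
mass_ng = moles * 207.2 * 1e9  # molar mass of Pb is about 207.2 g/mol
print(f"Q = {charge_c:.3e} C, N = {moles:.3e} mol, m = {mass_ng:.2f} ng")
```

Peak-current quantification, the other option mentioned in the text, would instead rely on a calibration against standards, because the peak height depends on sweep rate and electrode geometry.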

The Authority has been given a wide brief, so that it can cover all stages of food production and supply, from primary production to the safety of animal feed, right through to the supply of food to consumers. It gathers information from all parts of the globe, keeping an eye on new developments in science. It shares its findings and listens to the views of others through a network (advisory forum) that will be developed over time, as well as interacting with experts and decision-makers on many levels. The Authority carries out assessments of risks to the food chain and indeed can carry out scientific assessment on any matter that may have a direct or indirect effect on the safety of the food supply, including matters relating to animal health, animal welfare, and plant health. In keeping with the principle of subsidiarity, Community action in the field of public health is designed to fully respect the responsibilities of the Member States for the organization and delivery of health services and medical care. In 2000 the European Commission adopted a Communication on the Health Strategy of the European Community. The Strategy encompasses work not only in the health sector but across all policy areas. In the nutrition arena, the scientific community has estimated that an unhealthy diet and a sedentary lifestyle might be responsible for up to one-third of cancer cases, and for approximately one-third of premature deaths due to cardiovascular disease in Europe.
In terms of nutrition, the two main objectives are to collect quality information and make it accessible to people, professionals, and policy-makers, and to establish a network of Member State expert institutes to improve dietary habits and physical activity habits in Europe. The long-term objective is to work toward the establishment of a coherent and comprehensive Community strategy on diet, physical activity, and health, which will be built progressively. It will include the mainstreaming of nutrition and physical activity into all relevant policies at local, regional, national, and European levels and the creation of the necessary supporting environments. At Community level, such a strategy would cut across a number of Community policies and would need to be actively supported by them. It would also need to actively engage all relevant stakeholders, including the food industry, civil society, and the media. Finally, it would need to be based on sound scientific evidence showing relations between certain dietary patterns and risk factors for certain chronic diseases. The European Network on Nutrition and Physical Activity, which the Commission established, will give advice during the process. In December 2005 the Commission published a Green Paper called "Promoting healthy diets and physical activity: a European dimension for the prevention of overweight, obesity and chronic diseases." Nutrition is clearly recognized as having a key role in public health and, together with lifestyle, has a central position within the strategy and actions of the Community in public health. The bedrock of sensible planning of nutrition regulation is the availability of good data and the intelligent use of that data to both inform and challenge policymakers.
Globalization of the food supply has been accompanied by evolving governance issues that have produced a regulatory environment at the national and global levels, led by large national and international agencies, in order to facilitate trade and to establish and retain the confidence of consumers in the food supply chain. In this context, nutrition research involves advances in knowledge concerning not only nutrient functions and the short- or long-term influences of food and nutrient consumption on health, but also studies on food composition, dietary intake, and food and nutrient utilization by the organism. The design of any investigation involves the selection of the research topic accompanied by the formulation of both the hypotheses and the aims, the preparation of a research protocol with appropriate and detailed methods and, eventually, the execution of the study under controlled conditions and the analysis of the findings leading to a further hypothesis. These stages of a typical research program are commonly followed by the interpretation of the results and subsequent theory formulation. Other important aspects of study design are the selection of statistical analyses and the definition of ethical commitments. This chapter begins with a review of some of the important issues in statistical analysis and experimental design. The ensuing sections look at in vitro techniques, animal models, and finally human studies. This section is intended to give students some very basic concepts of statistics as they relate to research methodology.
Validity
Validity describes the degree to which the inference drawn from a study is warranted when account is taken of the study methods, the representativeness of the study sample, and the nature of its source population. External validity refers to the extension of the findings from the sample to a target population.
Accuracy
Accuracy is a term used to describe the extent to which a measurement is close to the true value (Figure 13).
Reliability
Reliability or reproducibility refers to the consistency or repeatability of a measure. A reliable measure is measuring something consistently, but not necessarily estimating its true value. If a measurement error occurs in two separate measurements with exactly the same magnitude and direction, this measurement may be fully reliable but invalid. The kappa inter-rater agreement statistic (for categorical variables) and the intraclass correlation coefficient (for continuous variables) are frequently used to assess reliability.
Precision
Precision is described as the quality of being sharply defined or stated; thus, precision is sometimes indicated by the number of significant digits in the measurement. In a more restricted statistical sense, precision refers to the reduction in random error. It can be improved either by increasing the size of a study or by using a design with higher efficiency.
Sensitivity and specificity
Measures of sensitivity and specificity relate to the validity of a value. Sensitivity reflects the proportion of affected individuals who test positive, while specificity refers to the proportion of nonaffected individuals who test negative, that is, persons without the condition who are correctly classified as being free of it by the test or criteria (Table 13).
Data description
Statistics may have either a descriptive or an inferential role in nutrition research. Descriptive statistical methods are a powerful tool to summarize large amounts of data. These descriptive purposes are served either by calculating statistical indices, such as the mean, median, and standard deviation, or by using graphical procedures, such as histograms, box plots, and scatter plots.
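Sensitivity, specificity, and the kappa statistic reduce to simple arithmetic on contingency tables. This sketch uses hypothetical counts and Cohen's kappa for two raters, $\kappa = (p_o - p_e)/(1 - p_e)$, where $p_o$ is the observed and $p_e$ the chance-expected agreement; it does not cover the intraclass correlation coefficient.

```python
# Sketch: sensitivity/specificity from a 2x2 table and Cohen's kappa for
# two raters. All counts are hypothetical.

def sensitivity_specificity(tp, fn, fp, tn):
    """tp/fn: persons with the condition; fp/tn: persons without it."""
    sensitivity = tp / (tp + fn)   # affected who test positive
    specificity = tn / (tn + fp)   # unaffected who test negative
    return sensitivity, specificity


def cohens_kappa(table):
    """Cohen's kappa for a square agreement table (rows: rater 1, cols: rater 2)."""
    n = sum(sum(row) for row in table)
    k = len(table)
    p_observed = sum(table[i][i] for i in range(k)) / n
    row_totals = [sum(row) for row in table]
    col_totals = [sum(table[i][j] for i in range(k)) for j in range(k)]
    p_expected = sum(r * c for r, c in zip(row_totals, col_totals)) / n ** 2
    return (p_observed - p_expected) / (1 - p_expected)


sens, spec = sensitivity_specificity(tp=90, fn=10, fp=20, tn=180)
kappa = cohens_kappa([[20, 5],
                      [10, 15]])
print(f"sensitivity={sens:.2f}, specificity={spec:.2f}, kappa={kappa:.2f}")
```

In the kappa example the raters agree on 70 percent of cases, but half of that agreement would be expected by chance alone, so kappa is only 0.4.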
Some errors in data collection are most easily detected graphically with a histogram or a box plot (box-and-whisker plot). These two graphs are useful for describing the distribution of a quantitative variable. Nominal variables, such as gender, and ordinal variables, such as educational level, can be presented simply tabulated as proportions within categories or ranks. Continuous variables, such as age and weight, are customarily presented by summary statistics describing the frequency distribution. These summary statistics include measures of central tendency (mean, median) and measures of spread (variance, standard deviation, coefficient of variation). The standard deviation describes the "spread" or variation around the sample mean.
Hypothesis testing
The first step in hypothesis testing is formulating a hypothesis called the null hypothesis. This null hypothesis can often be stated as the negation of the research hypothesis that the investigator is looking for. In a second step, we calculate the probability that the result could have been obtained if the null hypothesis were true in the population from which the sample has been drawn. This probability is the p-value; the smaller it is, the stronger the evidence to reject the null hypothesis. The p-value is a conditional probability:
$$p = \Pr(\text{differences} \geq \text{differences found} \mid H_0 \text{ true})$$
where the vertical bar ($\mid$) means "conditional on." The p-value by no means expresses the probability that the null hypothesis is true. Hypothesis testing helps in deciding whether or not the null hypothesis can be rejected; when a predefined cut-off for p is used, the analysis is treated as a decision-making process. A type I error consists of rejecting the null hypothesis when the null hypothesis is in fact true.
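The summary statistics and the conditional definition of the p-value can be illustrated together. The sketch below swaps in an exact permutation test, which is one of several ways to obtain a p-value and not necessarily the test the text has in mind: under the null hypothesis the group labels are exchangeable, so the p-value is the fraction of all relabelings that give a difference in means at least as large as the one observed. The intake values are hypothetical.

```python
from itertools import combinations
from statistics import mean, median, stdev

# Sketch: descriptive summary plus an exact two-sided permutation p-value
# for a difference in means. Data are hypothetical.

def describe(values):
    m = mean(values)
    sd = stdev(values)  # sample standard deviation (n - 1 denominator)
    return {"mean": m, "median": median(values), "sd": sd, "cv": sd / m}


def permutation_p_value(group_a, group_b):
    """P(|difference| >= |observed difference|, given H0: labels exchangeable)."""
    pooled = list(group_a) + list(group_b)
    observed = abs(mean(group_b) - mean(group_a))
    extreme = total = 0
    for idx in combinations(range(len(pooled)), len(group_a)):
        chosen = set(idx)
        a = [pooled[i] for i in chosen]
        b = [pooled[i] for i in range(len(pooled)) if i not in chosen]
        total += 1
        if abs(mean(b) - mean(a)) >= observed - 1e-9:  # tolerance for rounding
            extreme += 1
    return extreme / total


group_a = [12.0, 15.0, 11.0]   # hypothetical intakes, group A
group_b = [18.0, 21.0, 19.0]   # hypothetical intakes, group B
stats_a = describe(group_a)
p = permutation_p_value(group_a, group_b)
print(stats_a)
print(f"p = {p:.2f}")
```

Even though every value in group B exceeds every value in group A, only 20 relabelings exist with three subjects per group, so the smallest attainable two-sided p-value is 0.1; this is one way small samples limit the evidence a study can provide.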
Power calculations
The power of a study is the probability of obtaining a statistically significant result when a true effect of a specified size exists.
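For the common case of comparing two group means, this definition has a standard normal-approximation form. This is a minimal sketch assuming equal group sizes, a known common standard deviation $\sigma$, and a two-sided test at level $\alpha$; the effect size of half a standard deviation is a hypothetical example. It computes the per-group sample size $n = 2(z_{1-\alpha/2} + z_{1-\beta})^2\sigma^2/\Delta^2$ and the power achieved for a given $n$, ignoring the negligible rejection probability in the opposite tail.

```python
import math
from statistics import NormalDist

# Sketch: normal-approximation power and sample size for a two-sided
# two-sample comparison of means with equal n and known common SD.

Z = NormalDist()  # standard normal distribution


def power_two_sample(delta, sigma, n_per_group, alpha=0.05):
    """Approximate power; the tiny probability in the opposite tail is ignored."""
    z_crit = Z.inv_cdf(1 - alpha / 2)
    standard_error = sigma * math.sqrt(2 / n_per_group)
    return Z.cdf(abs(delta) / standard_error - z_crit)


def n_per_group_for_power(delta, sigma, power=0.8, alpha=0.05):
    """Smallest n per group reaching the target power (rounded up)."""
    z_alpha = Z.inv_cdf(1 - alpha / 2)
    z_beta = Z.inv_cdf(power)
    n = 2 * (z_alpha + z_beta) ** 2 * sigma ** 2 / delta ** 2
    return math.ceil(n)


# Hypothetical: detect a difference of 0.5 SD with 80% power at alpha = 0.05
n = n_per_group_for_power(delta=0.5, sigma=1.0)
print(n, round(power_two_sample(0.5, 1.0, n), 3))
```

Halving the detectable difference roughly quadruples the required sample size, since $n$ scales with $1/\Delta^2$.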


Colonization of the nasopharynx or pharynx can persist asymptomatically for months. Household contact with a meningococcal disease pt or a meningococcal carrier, household or institutional crowding, exposure to tobacco smoke, and a recent viral upper respiratory infection are risk factors for colonization and invasive disease.
Pathogenesis
Meningococci colonize the upper respiratory tract, are internalized by nonciliated mucosal cells, enter the submucosa, and reach the bloodstream. If bacterial multiplication is slow, the bacteria may seed local sites such as the meninges. Morbidity and mortality from meningococcemia have been directly correlated with the amount of circulating endotoxin, which can be 10- to 1000-fold higher than levels seen in other gram-negative bacteremias. Deficiencies in antithrombin and proteins C and S can occur during meningococcal disease, and there is a strong negative correlation between protein C activity and mortality risk. Antibodies to serogroup-specific capsular polysaccharide constitute the major host defense. Protective antibodies are induced by colonization with nonpathogenic bacteria possessing cross-reactive antigens. Rash: erythematous macules, primarily on the trunk and extremities, that become petechial and, in severe cases, purpuric and may coalesce into hemorrhagic bullae that necrose and ulcerate. Long-term morbidity includes loss of skin, limbs, or digits from ischemic necrosis and infarction. Chronic meningococcemia is a rare syndrome of episodic fever, rash, and arthralgias lasting for weeks to months. If treated with steroids, this condition may become fulminant or evolve into meningitis.
Petechial or purpuric skin lesions help distinguish this form of bacterial meningitis from other types.
Diagnosis
Definitive diagnosis relies on isolation of the organism from normally sterile body fluids. Pts with fulminant meningococcemia need aggressive supportive therapy that can include vigorous fluid resuscitation, elective ventilation, pressor agents, fresh-frozen plasma (in pts with abnormal clotting parameters), and supplemental glucocorticoid treatment (hydrocortisone, 1 mg/kg every 6 h) for impaired adrenal reserve. If the platelet count is <50,000/µL or if there is active bleeding, activated protein C should not be given. Receipt of antibiotics prior to hospital admission has been associated with a better outcome in some studies. The conjugate vaccine has increased immunogenicity and probably confers longer-duration protection than the previously available polysaccharide vaccine. In an outbreak setting in developing countries: Long-acting chloramphenicol in oil suspension (Tifomycin), single dose Adults: 3. The organism is a nonsporulating, gram-positive rod that demonstrates motility when cultured at low temperatures. Listeria can be found in processed and unprocessed foods such as soft cheeses, delicatessen meats, hot dogs, milk, and cold salads.
Clinical Features
Listeria causes several clinical syndromes, of which meningitis and septicemia are most common. Listeriosis should be considered in outbreaks of gastroenteritis when cultures for other likely pathogens are negative. Pts have fever and headache followed by asymmetric cranial nerve deficits, cerebellar signs, and hemiparetic/hemisensory deficits. Pts are usually bacteremic and present with a nonspecific febrile illness that includes myalgias/arthralgias, backache, and headache. Overwhelming listerial fetal infection (granulomatosis infantiseptica) is characterized by miliary microabscesses and granulomas, most often in the skin, liver, and spleen.
Diagnosis
Timely diagnosis requires that the illness be considered in groups at risk: pregnant women, elderly pts, neonates, immunocompromised pts (e. Of live-born treated neonates in one series, 60% recovered fully, 24% died, and 13% were left with sequelae or complications.
Prevention
Pregnant women and other persons at risk for listeriosis should avoid soft cheeses and should avoid or thoroughly reheat ready-to-eat and delicatessen foods. Use of Hib conjugate vaccine has dramatically decreased rates of Hib colonization and invasive disease. Meningitis is associated with high morbidity: 6% of pts have sensorineural hearing loss, one-fourth have some significant sequelae, and mortality is ~5%. Epiglottitis, which occurs in older children and occasionally in adults, involves cellulitis of the epiglottis and supraglottic tissues that begins with a sore throat and progresses rapidly to dysphagia, drooling, and airway obstruction. Miscellaneous: childhood otitis media, puerperal sepsis, neonatal bacteremia, sinusitis, and, less commonly, invasive infections (e. Many agents are useful: amoxicillin/clavulanate, extended-spectrum cephalosporins, newer macrolides (azithromycin or clarithromycin), and fluoroquinolones (in nonpregnant adults).
Prevention
Hib vaccine is recommended for all children; the immunization series should be started at ~2 months of age. All children and adults (except pregnant women) in households with a case of Hib disease and at least one incompletely immunized contact <4 years of age should receive prophylaxis with oral rifampin. In households, attack rates are 80% among unimmunized contacts and 20% among immunized contacts. Pertussis remains an important cause of infant morbidity and death in developing countries. In the United States, the incidence has increased slowly since 1976, particularly among adolescents and adults. Respiratory infections should be treated with a 5-day course and sinusitis for longer durations.
Several Haemophilus species, Actinobacillus actinomycetemcomitans, Cardiobacterium hominis, Eikenella corrodens, and Kingella kingae make up this group. It is associated with severe destructive periodontal disease, which also is frequently evident in pts with endocarditis. Native-valve endocarditis should be treated for 4 weeks and prosthetic-valve endocarditis for 6 weeks. The organism is usually pan-sensitive, but high-level penicillin resistance has been reported. The organism is typically resistant to clindamycin, metronidazole, and aminoglycosides.
Treatment (Haemophilus species, Actinobacillus actinomycetemcomitans, Cardiobacterium hominis, Eikenella corrodens, Kingella kingae): ceftriaxone (2 g/d) or fluoroquinolones. Susceptibility testing should be performed in all cases to guide therapy.
Escherichia coli and, to a lesser degree, Klebsiella and Proteus account for most infections. Combination empirical antimicrobial therapy may be appropriate pending susceptibility results. Osteomyelitis, particularly vertebral, is more common than is generally appreciated; E. Currently, cephalosporins (particularly second-, third-, and fourth-generation agents), monobactams, piperacillin-tazobactam, carbapenems, and aminoglycosides retain good activity. However, testing for Shiga toxins or toxin genes is more sensitive, specific, and rapid.

Section 104 states: "The Administrator may conduct and accelerate R/D of low cost instrumentation techniques to facilitate determination of quantity and quality of air pollution emissions, including, but not limited to, automotive emissions." The pace of this work has accelerated slowly but significantly since the passage of the 1967 Amendments. Emphasis was placed on the development of a new generation of air quality instruments using physical principles rather than wet chemistry. Evaluation of instrumentation for measuring emissions from stationary sources has recently been initiated in accordance with provisions of the 1970 Clean Air Act. Section 110 (2F), on implementation plans, states that approval of such plans shall include, among other aspects, "requirements for installation of equipment by owners or operators of stationary sources to monitor emissions." Important areas of need for air pollution measurement capability are listed in Table 1. The listing is divided among air quality, motor vehicle emission, and stationary source requirements. The order within each area proceeds from research on pollutant composition and concentration, as related to atmospheric characteristics and biological effects, to regulatory needs in carrying out the provisions of Sections 110 and 111.
Table 1. Air pollution program elements requiring instrumentation and measurement development, evaluation, and standardization.
However, the Environmental Protection Agency will soon outrun this reservoir of measurement capability when it begins impending regulatory programs. It is essential that measuring requirements for R/D projects be supported now, or the measuring techniques will not be available for regulatory requirements later.
The complexity of the process and the timing involved will be considered in greater detail later in this discussion. For monitoring air pollution, the ability to measure 10 to 1000 µg/m³ is required. It appears that for stationary source applications the concentration range will be 100 to 1000 times higher. It follows that an instrument may have more than satisfactory sensitivity for monitoring stationary source emissions, but totally inadequate sensitivity for measuring ambient air quality. Response times of 1 second or less are needed for some motor vehicle applications, whereas periodic analyses once every 5 or 10 minutes, or even less frequently, are required for other air pollution measurements. Fortunately, it is possible to develop instruments with appropriate characteristics for the entire range of applications. Such an approach will tend to minimize instrument development costs, reduce problems of reliable commercialization of instrumentation, and speed standardization and collaborative testing programs. This approach must also produce instruments adequate for each of the applications listed in Table 1 at a reasonable cost per instrument. A sequence of activities required to develop and commercialize an instrument is given in Figure 1. In many past and some present instrument development programs, some of these steps have been abbreviated or omitted.
Figure 1. Sequence of activities in developing, evaluating and standardizing air pollution monitoring instrumentation (stages shown include final design activities, commercial production of the instrument, and applications).
The net result has been either unusable instruments or the expenditure of much work in rebuilding instruments. Aside from the cost and inefficiency involved, such abbreviated approaches actually prove more time consuming than an approach that systematically follows through on the necessary steps as listed.
As indicated in the time scale, it is most unusual for this sequence to take less than 2 to 2¼ years; the sequence can require more than 3 years if research problems occur early in the process or if prototypes must be redesigned. Unless they are developed for R/D projects, prototype instruments will not be available when required for regulatory standards. Cumbersome and often inadequate manual analytical methods must be substituted, to the discomfort of all, while the overdue instrumentation is being developed.
Instrumentation for Measuring Atmospheric Gases and Vapors
This discussion will be concerned primarily with the present status of and anticipated needs for instruments that can monitor ambient air. Point sampling instrumentation, along with remote and long-path-type instrumentation, will be considered for the monitoring of gases. Ambient air monitoring needs will be considered to include not only determination of urban pollution, but measurement of pollution on a regional and global scale. Because a number of atmospheric substances of concern do not result from primary emissions, but from chemical reactions, atmospheric transformations must be considered. Methods of analysis and instrumentation available up to about 1966 have received detailed consideration in a number of reviews [1-7]. Our first concern is with the justification for the development of new or improved instrumentation. It will be necessary to consider the deficiencies of the instruments for analyzing ambient air that have been available until recently. Many of the individual types of instruments are cumbersome, of low specificity, of limited sensitivity, and difficult to maintain because of complexities of design. Some of the instruments suffer from only one or two deficiencies, while others fail in almost all aspects. As a result, the amount of valid measurement data obtained has frequently been limited.
Attempts were made to improve some of these instruments with respect to specificity of response for ozone [8] and hydrocarbons [9,10]. Such activities were viewed as temporary expedients, necessary because of the lack of funds to develop new instruments. The usual approach was to place a substrate in the inlet system of the instrument to remove or hold back interfering substances. Experience has shown that such systems do not work very effectively in routine monitoring operations, although R/D personnel can handle them successfully in field studies. No R/D activity can anticipate all of the problems associated with operation under the variety of routine conditions in the field. One problem often experienced is variability in the time to partial or complete breakthrough of the interferences through the substrate under varying atmospheric conditions. In general, instruments that require auxiliary clean-up systems to achieve specificity are more prone to give incorrect results than instruments whose basic sensing principles confer the required specificity. As a result, few concerned persons have expressed satisfaction with the instruments used in monitoring networks during the last 10 or 15 years. Unfortunately, little incentive and fewer resources were available to remedy the situation until recently.

Although the resources available in the last several years have been modest, considerable progress has been made, particularly with respect to new or improved instruments for measuring inorganic gases. Instruments have been developed, and are now receiving or have received field evaluation, for sulfur oxides, ozone, carbon monoxide, nitric oxide, and nitrogen dioxide. In addition, a better technique for determining nonmethane hydrocarbons has been developed. Most of these instrument developments have utilized sensors or laboratory equipment produced by research in recent years.
These sensors had to be evaluated for sensitivity and specificity against urban air pollution requirements. Field studies have been required to permit comparisons of the instruments used for each of the several pollutants under representative ambient air conditions. Such investigations, from laboratory evaluation of a sensor's potential applicability to air pollution needs through field evaluation, require several years of continuing effort.

The gas chromatographic technique for carbon monoxide and methane, utilized in former years in laboratory photochemical studies [11-13], was developed into a convenient monitoring instrument [14,15]. In combination with a capability for measuring total hydrocarbons, such a gas chromatographic analyzer provides a highly specific and sensitive means of analyzing carbon monoxide and nonmethane hydrocarbons over a wide range of atmospheric concentrations. As a carbon monoxide analyzer, the gas chromatograph is much more sensitive and specific than current nondispersive carbon monoxide analyzers. This approach can also be extended to monitoring other gaseous hydrocarbons. The direct analysis for methane is much more desirable than the earlier attempt to use substrates to remove hydrocarbons other than methane [9,10]. That type of technique has given erratic results in routine monitoring because of the care necessary to maintain the characteristics of the substrate that provides the required specificity. Another technique developed for methane and other hydrocarbons involves selective combustion, with subsequent detection by a water sorption sensor [16]. The term "oxidant" has no exact meaning, since the response obtained depends on the presence of various interfering substances.
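The text implies that nonmethane hydrocarbons are obtained by difference: a total-hydrocarbon measurement minus the methane separated by the gas chromatograph. A minimal sketch of that arithmetic follows. The function name, the assumption that both readings share the same units (ppm carbon here), and the choice to clamp small negative differences caused by measurement noise to zero are illustrative assumptions, not details from the source.

```python
# Illustrative sketch; function name, units and zero-clamping are assumptions.

def nonmethane_hydrocarbons(total_hc, methane):
    """Nonmethane hydrocarbons by difference: total hydrocarbons minus methane.

    Both readings are assumed to be in the same units (e.g. ppm carbon).
    A slightly negative difference, which can arise from measurement noise
    when nearly all of the hydrocarbon is methane, is clamped to zero.
    """
    if total_hc < 0 or methane < 0:
        raise ValueError("concentrations must be non-negative")
    return max(total_hc - methane, 0.0)


if __name__ == "__main__":
    # Hypothetical readings in ppm carbon.
    print(nonmethane_hydrocarbons(3.5, 1.5))  # 2.0
    print(nonmethane_hydrocarbons(1.4, 1.5))  # 0.0
```

Because the methane value here comes from a chromatographic separation rather than a scrubbing substrate, the difference does not depend on substrate breakthrough, which is consistent with the text's preference for direct methane analysis over the earlier approach [9,10].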